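Sending voice messages with Vue

Basic workflow for voice messages

Sending voice messages from a Vue app relies on the browser's media capture and recording APIs: getUserMedia for microphone access and MediaRecorder for capturing the audio. The Web Audio API is only needed for extras such as a waveform display. The key steps are: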

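- Request microphone permission

  Call navigator.mediaDevices.getUserMedia to ask for microphone access. It is only available in a secure context (HTTPS or localhost), and the returned promise rejects if the user denies permission:

      const stream = await navigator.mediaDevices.getUserMedia({ audio: true });

- Initialize the recorder

  Create a MediaRecorder instance and set the audio format. Not every browser can record 'audio/webm', so check MediaRecorder.isTypeSupported first when targeting more than one browser:

      const mediaRecorder = new MediaRecorder(stream, { mimeType: 'audio/webm' });

- Handle the recorded data

  Collect the recorded chunks as they arrive; they are merged into a single Blob once recording stops:

      let audioChunks = [];
      mediaRecorder.ondataavailable = (e) => { audioChunks.push(e.data); };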
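Hard-coding 'audio/webm' can fail, because the MediaRecorder constructor throws when it is given a MIME type the browser cannot record. The following is a minimal sketch of choosing a format at runtime. The candidate list, its order, and the helper names pickMimeType and extensionFor are assumptions made for illustration; they are not part of any library.

```javascript
// Candidate formats in order of preference (an assumption; adjust to your backend).
const CANDIDATE_TYPES = [
  'audio/webm;codecs=opus',
  'audio/webm',
  'audio/mp4',
  'audio/ogg;codecs=opus',
];

// Returns the first type accepted by `isSupported`,
// or '' so the caller can let the browser pick its default.
function pickMimeType(candidates, isSupported) {
  return candidates.find((type) => isSupported(type)) || '';
}

// Maps a MIME type (with or without codec parameters) to a file extension.
function extensionFor(mimeType) {
  const base = mimeType.split(';')[0].trim().toLowerCase();
  const map = {
    'audio/webm': 'webm',
    'audio/mp4': 'm4a',
    'audio/ogg': 'ogg',
    'audio/mpeg': 'mp3',
  };
  return map[base] || 'bin';
}

// Browser usage (MediaRecorder does not exist in Node.js):
// const mimeType = pickMimeType(CANDIDATE_TYPES, (t) => MediaRecorder.isTypeSupported(t));
// const recorder = new MediaRecorder(stream, mimeType ? { mimeType } : undefined);
// const fileName = 'recording.' + extensionFor(recorder.mimeType);
```

Passing the support check in as a function keeps the selection logic independent of the browser, so it can be exercised without a microphone or a MediaRecorder implementation.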

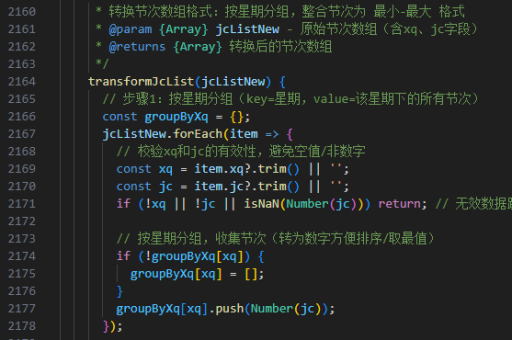

Front-end UI implementation

Create a Vue component that controls the recording flow:

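    <template>
      <div>
        <button
          @mousedown="startRecording"
          @mouseup="stopRecording"
          @mouseleave="stopRecording"
        >
          Hold to talk
        </button>
        <audio v-if="audioUrl" :src="audioUrl" controls></audio>
        <button :disabled="!audioBlob" @click="sendAudio">Send</button>
      </div>
    </template>

    <script>
    import axios from 'axios';

    export default {
      data() {
        return {
          mediaRecorder: null,
          audioChunks: [],
          audioBlob: null,
          audioUrl: '',
          isPressed: false
        };
      },
      methods: {
        async startRecording() {
          this.isPressed = true;
          const stream = await navigator.mediaDevices.getUserMedia({ audio: true });
          if (!this.isPressed) {
            // The button was released while waiting for permission
            stream.getTracks().forEach((track) => track.stop());
            return;
          }
          this.audioChunks = []; // discard chunks from the previous recording
          const recorder = new MediaRecorder(stream);
          // Register the handlers before start() so no event is missed
          recorder.ondataavailable = (e) => {
            if (e.data.size > 0) this.audioChunks.push(e.data);
          };
          recorder.onstop = () => {
            // Use the recorder's actual MIME type; it is not WebM in every browser
            this.audioBlob = new Blob(this.audioChunks, { type: recorder.mimeType });
            if (this.audioUrl) URL.revokeObjectURL(this.audioUrl);
            this.audioUrl = URL.createObjectURL(this.audioBlob);
            stream.getTracks().forEach((track) => track.stop()); // release the microphone
          };
          this.mediaRecorder = recorder;
          recorder.start();
        },
        stopRecording() {
          this.isPressed = false;
          if (this.mediaRecorder && this.mediaRecorder.state === 'recording') {
            this.mediaRecorder.stop();
          }
        },
        sendAudio() {
          if (!this.audioBlob) return;
          const formData = new FormData();
          // The file name assumes WebM; change it if the recorder produced another format
          formData.append('audio', this.audioBlob, 'recording.webm');
          // Send to the server
          axios.post('/api/upload-audio', formData)
            .then((response) => {
              console.log('Sent successfully', response);
            })
            .catch((error) => {
              console.error('Failed to send', error);
            });
        }
      }
    };
    </script>

Server-side handling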
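An upload endpoint accepts whatever the client sends unless it is told otherwise, so it is worth rejecting non-audio and oversized files. Below is a minimal sketch of such a check, written to match the fileFilter and limits options of multer, the upload middleware used in this section. The 5 MB cap and the names MAX_AUDIO_BYTES, audioFileFilter, and uploadOptions are assumptions for illustration, and the filter only works if the client sets a MIME type on the uploaded Blob.

```javascript
const MAX_AUDIO_BYTES = 5 * 1024 * 1024; // assumed cap of 5 MB per voice message

// Follows multer's fileFilter signature: (req, file, cb).
function audioFileFilter(req, file, cb) {
  const isAudio =
    typeof file.mimetype === 'string' && file.mimetype.startsWith('audio/');
  if (isAudio) {
    cb(null, true); // accept the file
  } else {
    cb(new Error('Only audio uploads are accepted')); // reject with an error
  }
}

// Options object intended to be passed to multer().
const uploadOptions = {
  dest: 'uploads/',
  limits: { fileSize: MAX_AUDIO_BYTES, files: 1 },
  fileFilter: audioFileFilter,
};

// Usage with the Express route in this section:
// const upload = multer(uploadOptions);
// app.post('/api/upload-audio', upload.single('audio'), handler);
```

Matching on the 'audio/' prefix rather than an exact string keeps the check working when the browser appends codec parameters or records in a container other than WebM.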

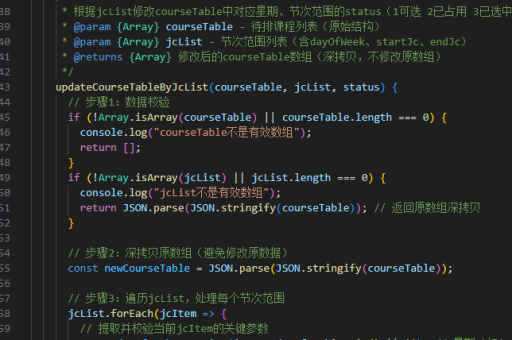

A Node.js example that receives the audio file:

const express = require('express');

const multer = require('multer');

const upload = multer({ dest: 'uploads/' });

app.post('/api/upload-audio', upload.single('audio'), (req, res) => {

console.log('收到音频文件:', req.file);

res.json({ status: 'success' });

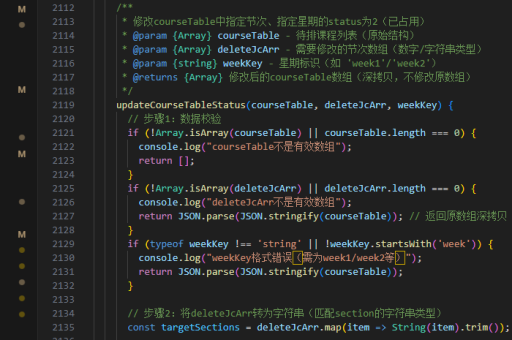

});兼容性处理

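- Format conversion

  WebM does not play everywhere, so consider converting it to the more widely supported MP3 format. This can be done in the browser with ffmpeg.wasm (the @ffmpeg/ffmpeg package); the code below uses the createFFmpeg API of its 0.11.x releases:

      import { createFFmpeg, fetchFile } from '@ffmpeg/ffmpeg';

      const ffmpeg = createFFmpeg({ log: true });

      async function convertToMp3(webmBlob) {
        // Load the WebAssembly core only once
        if (!ffmpeg.isLoaded()) await ffmpeg.load();
        ffmpeg.FS('writeFile', 'input.webm', await fetchFile(webmBlob));
        await ffmpeg.run('-i', 'input.webm', 'output.mp3');
        // readFile returns a Uint8Array; wrap it in a Blob for playback or upload
        const data = ffmpeg.FS('readFile', 'output.mp3');
        return new Blob([data], { type: 'audio/mpeg' });
      }

- Mobile support

  Add touch event handlers. The .prevent modifier keeps the browser from also firing emulated mouse events after the touch:

      <button
        @touchstart.prevent="startRecording"
        @touchend.prevent="stopRecording"
        @touchcancel="stopRecording"
      >
        Hold to talk
      </button>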
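When both mouse and touch handlers are bound to the same button, press events can overlap, and a release can arrive while the microphone permission prompt is still open. One way to make the hold-to-talk button robust is a small state guard around the start and stop callbacks. This is a sketch under assumptions: createHoldController and its method names are hypothetical, and start and stop stand in for the component's startRecording and stopRecording.

```javascript
// Wraps start/stop callbacks so that overlapping press events cannot start
// two recordings, and a release during startup is honored once startup ends.
function createHoldController(start, stop) {
  let state = 'idle'; // 'idle' | 'starting' | 'recording'
  let releaseRequested = false;

  async function press() {
    if (state !== 'idle') return; // ignore a duplicate press
    state = 'starting';
    releaseRequested = false;
    try {
      await start(); // may take a while, e.g. waiting for a permission prompt
    } catch (err) {
      state = 'idle'; // startup failed, allow another attempt
      throw err;
    }
    state = 'recording';
    if (releaseRequested) release();
  }

  function release() {
    if (state === 'starting') {
      releaseRequested = true; // stop as soon as startup completes
      return;
    }
    if (state !== 'recording') return; // nothing to stop
    state = 'idle';
    stop();
  }

  return { press, release, getState: () => state };
}

// Possible wiring in a component (hypothetical):
// const hold = createHoldController(() => vm.startRecording(), () => vm.stopRecording());
// <button @mousedown="hold.press" @mouseup="hold.release"
//         @touchstart.prevent="hold.press" @touchend.prevent="hold.release">
```

Keeping the state in one place means every event handler can call press or release without checking what the other handlers have already done.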

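Improving the user experience

- Add a visualization

  Use an AudioContext to display the waveform while recording:

      const audioContext = new AudioContext();
      const analyser = audioContext.createAnalyser();
      const source = audioContext.createMediaStreamSource(stream);
      source.connect(analyser);

      // Allocate the buffer once instead of on every frame
      const bufferLength = analyser.frequencyBinCount;
      const dataArray = new Uint8Array(bufferLength);

      function drawWaveform() {
        requestAnimationFrame(drawWaveform);
        analyser.getByteTimeDomainData(dataArray);
        // Draw the waveform on a canvas
      }

- Limit the recording duration

  Add logic that stops recording automatically:

      let recordingTimer;

      // Inside the component's methods:
      async startRecording() {
        // ...existing code
        recordingTimer = setTimeout(() => {
          this.stopRecording();
        }, 60000); // stop automatically after 60 seconds
      },
      stopRecording() {
        clearTimeout(recordingTimer); // cancel the timer on a manual stop
        // ...existing code
      }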
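The waveform step leaves the canvas drawing open. getByteTimeDomainData fills the array with unsigned bytes in the range 0 to 255, where 128 corresponds to silence, so drawing comes down to mapping each sample to an x/y position. The sketch below shows one way to do that; the function names waveformPoints and drawWaveformFrame, and the choice of a single polyline, are assumptions for illustration.

```javascript
// Converts unsigned 8-bit time-domain samples (0..255, 128 = silence)
// into canvas coordinates spanning width x height.
function waveformPoints(samples, width, height) {
  const points = [];
  const step = samples.length > 1 ? width / (samples.length - 1) : 0;
  for (let i = 0; i < samples.length; i++) {
    const normalized = samples[i] / 255; // 0..1
    // Canvas y grows downward, so larger sample values are drawn higher up
    points.push({ x: i * step, y: (1 - normalized) * height });
  }
  return points;
}

// Draws one frame as a single polyline on a 2D canvas context.
function drawWaveformFrame(ctx, samples, width, height) {
  const points = waveformPoints(samples, width, height);
  ctx.clearRect(0, 0, width, height);
  ctx.beginPath();
  points.forEach((p, i) => {
    if (i === 0) ctx.moveTo(p.x, p.y);
    else ctx.lineTo(p.x, p.y);
  });
  ctx.stroke();
}

// Browser usage inside the animation loop:
// analyser.getByteTimeDomainData(dataArray);
// drawWaveformFrame(canvas.getContext('2d'), dataArray, canvas.width, canvas.height);
```

Separating the coordinate math from the canvas calls makes the mapping easy to check on its own and to reuse with a different renderer such as SVG.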

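The approach above covers the complete flow from recording to sending. Adjust details such as the audio format and the server endpoint to fit your requirements.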