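How to Use Hadoop with Java

Processing Data with Hadoop in Java

Hadoop is an open-source distributed computing framework used mainly to process large data sets. Java is Hadoop's primary development language. The key steps for working with Hadoop from Java are outlined below.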

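Configuring the Hadoop Environment

Make sure Hadoop is installed correctly and that its environment variables (such as HADOOP_HOME) are set. In the $HADOOP_HOME/etc/hadoop directory, check that core-site.xml and hdfs-site.xml contain the correct HDFS settings. For example, core-site.xml sets the default file system:

    <configuration>
        <property>
            <name>fs.defaultFS</name>
            <value>hdfs://localhost:9000</value>
        </property>
    </configuration>

Writing a MapReduce Program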

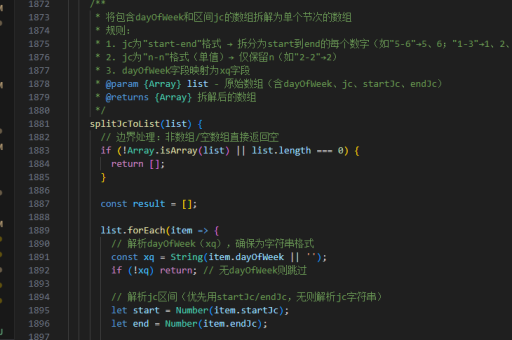
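As orientation, the data flow that a MapReduce job performs (map each record to key/value pairs, group the pairs by key, reduce each group) can be sketched in plain Java with no Hadoop dependency. This is only an illustration of the idea, not how Hadoop executes a job: the class LocalWordCount and its methods are hypothetical names, and the in-memory TreeMap stands in for the distributed shuffle.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

public class LocalWordCount {

    // Map phase: emit a (word, 1) pair for every whitespace-separated token.
    public static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        StringTokenizer itr = new StringTokenizer(line);
        while (itr.hasMoreTokens()) {
            pairs.add(Map.entry(itr.nextToken(), 1));
        }
        return pairs;
    }

    // Reduce phase: sum all counts that were grouped under one key.
    public static int reduce(List<Integer> values) {
        int sum = 0;
        for (int v : values) {
            sum += v;
        }
        return sum;
    }

    // Runs map, groups the pairs by key (a stand-in for the shuffle),
    // then reduces each group. TreeMap keeps the keys sorted.
    public static Map<String, Integer> run(List<String> lines) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (String line : lines) {
            for (Map.Entry<String, Integer> pair : map(line)) {
                grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>())
                       .add(pair.getValue());
            }
        }
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> e : grouped.entrySet()) {
            counts.put(e.getKey(), reduce(e.getValue()));
        }
        return counts;
    }

    public static void main(String[] args) {
        // prints {hadoop=1, hello=2, java=1}
        System.out.println(run(List.of("hello hadoop", "hello java")));
    }
}
```

In Hadoop the same two functions become the map and reduce methods of Mapper and Reducer subclasses, and the framework takes over the grouping step.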

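Create a Java class that implements the MapReduce logic. Extend the Mapper and Reducer classes and override their map and reduce methods; a main method configures the job so that the class can be launched with hadoop jar. The following is a simple WordCount example:

    import java.io.IOException;
    import java.util.StringTokenizer;
    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    public class WordCount {
        // Emits (word, 1) for every whitespace-separated token in a line.
        public static class TokenizerMapper extends Mapper<Object, Text, Text, IntWritable> {
            private final static IntWritable one = new IntWritable(1);
            private Text word = new Text();
            public void map(Object key, Text value, Context context) throws IOException, InterruptedException {
                StringTokenizer itr = new StringTokenizer(value.toString());
                while (itr.hasMoreTokens()) {
                    word.set(itr.nextToken());
                    context.write(word, one);
                }
            }
        }
        // Sums the counts emitted for each word.
        public static class IntSumReducer extends Reducer<Text, IntWritable, Text, IntWritable> {
            private IntWritable result = new IntWritable();
            public void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
                int sum = 0;
                for (IntWritable val : values) {
                    sum += val.get();
                }
                result.set(sum);
                context.write(key, result);
            }
        }
        // Driver: args[0] is the input path, args[1] the output path.
        public static void main(String[] args) throws Exception {
            Job job = Job.getInstance(new Configuration(), "word count");
            job.setJarByClass(WordCount.class);
            job.setMapperClass(TokenizerMapper.class);
            job.setReducerClass(IntSumReducer.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);
            FileInputFormat.addInputPath(job, new Path(args[0]));
            FileOutputFormat.setOutputPath(job, new Path(args[1]));
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }

Packaging and Submitting the Job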

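Use Maven or Gradle to package the project as a JAR file, then submit the job to the Hadoop cluster from the command line. The output path must not already exist, or the job fails at startup:

    hadoop jar wordcount.jar WordCount input_path output_path

Monitoring Job Status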

Monitor job execution through the YARN ResourceManager web UI (port 8088 by default) or with the command-line tools:

    yarn application -list

Reading HDFS Data

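Use Hadoop's Java API to read or write HDFS files. The following example reads a file line by line:

    import java.io.BufferedReader;
    import java.io.InputStreamReader;
    import java.nio.charset.StandardCharsets;
    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    // Picks up core-site.xml and hdfs-site.xml from the classpath.
    Configuration conf = new Configuration();
    FileSystem fs = FileSystem.get(conf);
    // A path without a scheme is resolved against fs.defaultFS. In a full
    // URI the part after hdfs:// is the NameNode host, not a directory.
    Path path = new Path("/path/to/file");
    // try-with-resources closes the reader and the underlying HDFS stream.
    try (BufferedReader reader = new BufferedReader(
            new InputStreamReader(fs.open(path), StandardCharsets.UTF_8))) {
        String line;
        while ((line = reader.readLine()) != null) {
            System.out.println(line);
        }
    }

Tuning MapReduce Performance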

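Adjust the job's configuration parameters to improve performance, for example by choosing suitable values for mapreduce.task.io.sort.mb (the map-side sort buffer size, in MB) and mapreduce.reduce.shuffle.input.buffer.percent (the fraction of the reducer's heap used to hold map outputs during the shuffle). Set them in mapred-site.xml:

    <property>
        <name>mapreduce.task.io.sort.mb</name>
        <value>200</value>
    </property>

Exception Handling and Debugging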
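One common way to handle bad input inside per-record logic is to catch the parsing exception, log it, count it, and move on, so that a single malformed record does not fail the whole task. The sketch below shows that pattern in plain Java under stated assumptions: TolerantParser and its methods are hypothetical names, java.util.logging stands in for Log4j so the code runs without dependencies, and a plain field stands in for what would more likely be a Hadoop counter in a real job.

```java
import java.util.List;
import java.util.logging.Level;
import java.util.logging.Logger;

public class TolerantParser {
    private static final Logger LOG = Logger.getLogger(TolerantParser.class.getName());

    // Number of records skipped because they could not be parsed.
    private int skipped = 0;

    // Sums the integer records, logging and skipping any malformed one
    // instead of letting a single bad line abort the whole run.
    public long sum(List<String> records) {
        long total = 0;
        for (String record : records) {
            try {
                total += Integer.parseInt(record.trim());
            } catch (NumberFormatException e) {
                skipped++;
                LOG.log(Level.WARNING, "Skipping malformed record: " + record, e);
            }
        }
        return total;
    }

    public int getSkipped() {
        return skipped;
    }

    public static void main(String[] args) {
        TolerantParser parser = new TolerantParser();
        long total = parser.sum(List.of("1", "2", "oops", " 3 "));
        // prints "6 (skipped 1)"; the warning itself goes to the log output
        System.out.println(total + " (skipped " + parser.getSkipped() + ")");
    }
}
```

Whether skipping is acceptable depends on the job; for some data sets a bad record should stop the run instead.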

Catch and handle the exceptions raised in Hadoop jobs, and record detailed logs with a logging tool such as Log4j. Set the log level in log4j.properties:

    log4j.logger.org.apache.hadoop=DEBUG

Using Hadoop Streaming

For languages other than Java, scripts can be run through the Hadoop Streaming API. Specify the -mapper and -reducer options when submitting the job:

    hadoop jar $HADOOP_HOME/share/hadoop/tools/lib/hadoop-streaming-*.jar \
        -input input_path \
        -output output_path \
        -mapper mapper_script \
        -reducer reducer_script
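Hadoop Streaming passes records to the mapper and reducer as lines of text on standard input and expects tab-separated key/value lines on standard output. The reducer's input arrives sorted by key, so equal keys are adjacent rather than pre-grouped. The sketch below illustrates that line-oriented contract for word counting. It is written in Java only to stay consistent with the rest of this document; a real Streaming job would typically use a script in another language. StreamingSketch and its methods are hypothetical, and they operate on strings rather than on System.in and System.out so they can be run in isolation.

```java
import java.util.Arrays;

public class StreamingSketch {

    // Mapper side: for each input line, emit one "word<TAB>1" line per token.
    public static String map(String input) {
        StringBuilder out = new StringBuilder();
        for (String line : input.split("\n")) {
            for (String token : line.trim().split("\\s+")) {
                if (!token.isEmpty()) {
                    out.append(token).append("\t1\n");
                }
            }
        }
        return out.toString();
    }

    // Reducer side: input lines are sorted by key, so sum each run of
    // consecutive lines that share a key and emit "word<TAB>total".
    public static String reduce(String sortedInput) {
        StringBuilder out = new StringBuilder();
        String currentKey = null;
        long sum = 0;
        for (String line : sortedInput.split("\n")) {
            int tab = line.indexOf('\t');
            if (tab < 0) {
                continue; // ignore lines without a key/value separator
            }
            String key = line.substring(0, tab);
            long value = Long.parseLong(line.substring(tab + 1).trim());
            if (currentKey != null && !key.equals(currentKey)) {
                out.append(currentKey).append('\t').append(sum).append('\n');
                sum = 0;
            }
            currentKey = key;
            sum += value;
        }
        if (currentKey != null) {
            out.append(currentKey).append('\t').append(sum).append('\n');
        }
        return out.toString();
    }

    public static void main(String[] args) {
        String mapped = map("hello hadoop\nhello java\n");
        // Local stand-in for the sort that the framework performs between phases.
        String[] lines = mapped.split("\n");
        Arrays.sort(lines);
        System.out.print(reduce(String.join("\n", lines)));
    }
}
```

Running main prints three tab-separated lines: hadoop 1, hello 2, java 1.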